Search the Community

Showing results for tags 'Virtual machine'.

-

Newly installed Nvidia Quadro K2000 in a Dell R910 - hw transcoding

metalcated posted a topic in Linux

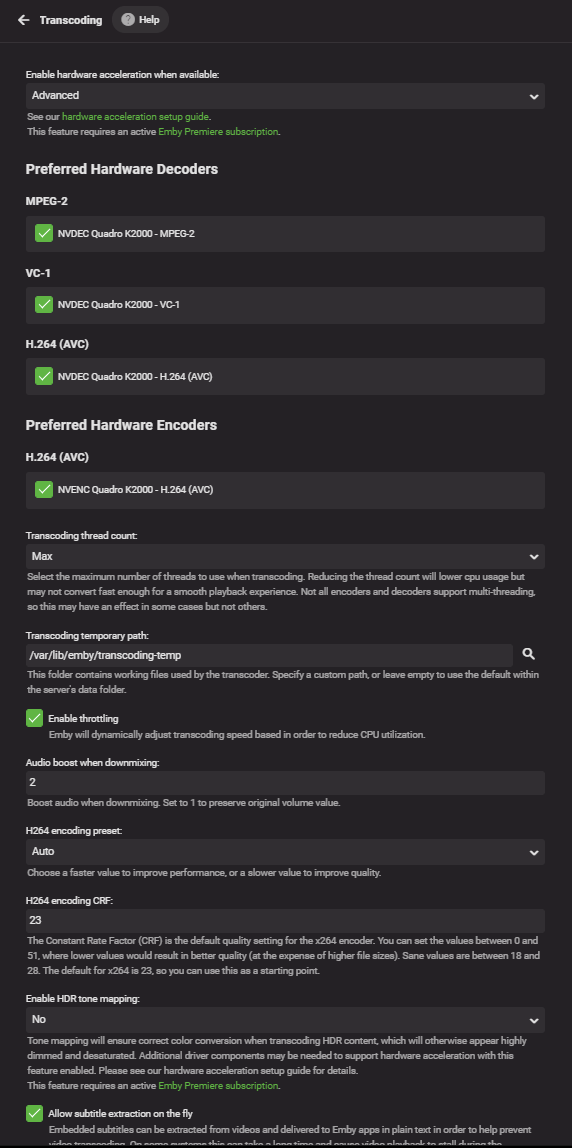

I just installed a Quadro K2000 2 GB video card into a native x16 PCIe slot in my Dell R910. I modified the heatsink and installed a large fan to help keep it cooler and make it fit into the server case. It's staying pretty cool.

I did this for several reasons:

- Running on a VM is not always the best approach.
- Having hardware support should improve performance.
- I want 4K movies to not skip and stutter while watching them (transcoding, almost always of the audio).
- I recently bought an HDHomeRun and want better performance (similar to the 4K issues).
- This is something I have been wanting to do for a while.
- I would rather do this than buy a Synology or QNAP or build a dedicated workstation.

All that said, not much improved after installing the card. Maybe a slight improvement, but overall I am still not happy with the implementation:

- Video still stutters with 4K movies, to the point of being unwatchable.
- Live TV still stutters unless I pause it for 5 seconds and play again, after which it's fine. I feel like this should not happen.

None of this used to happen with my 4K movies, even with transcoding enabled, running on a VM in vCenter on the same server without a physical video card. I am not certain what changed 3-4 years back, but before that point 4K movies played perfectly. It is too hard to work out what changed; all I want is to understand how to fix this moving forward.

I have several devices I can test this with:

- Web browsers
- Nvidia Shield Pro
- LG OLED TV
- LG LCD TV
- Android phones
- iPhones
- iPad
- PC / MacBook

All of them give me the same results. I can provide any details needed to narrow down where the issue is. If in the end this simply is not a good setup (running on a VM with passthrough), then I will buckle down and buy a Synology or QNAP, or hell, even build a dedicated media box out of some older hardware using the video cards I have here at home (spare 1030 and 1080).
Current specs:

- OS: Ubuntu 20.04.3 LTS
- CPU: 16
- Memory: 32 GB
- Storage: SAS SSD
- Storage for Transcoding: SAS SSD
- Dedicated Audio/Video: Nvidia Quadro K2000 (2GB)

hardware_detection-63769669756.txt
ffmpeg-transcode-b53abc80-c465-4ef9-b1f9-3c82cd0a5a67_1.txt

- 31 replies
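One way to see whether a transcode actually used the card is to look through the attached ffmpeg transcode log for hardware codec names. The following is only a rough sketch: it assumes the log contains the ffmpeg command line or stream mapping in plain text, and the function name `classify_transcode_log` and the sample line are made up for illustration. The marker strings themselves (`h264_nvenc`, `hevc_nvenc`, `h264_cuvid`, `hevc_cuvid`, `libx264`, `libx265`) are ffmpeg's own codec names.

```python
import re

# ffmpeg codec/hwaccel names that indicate an NVIDIA hardware path.
HW_MARKERS = ("h264_nvenc", "hevc_nvenc", "h264_cuvid", "hevc_cuvid", "cuda", "nvdec")
# ffmpeg software encoders, listed for contrast.
SW_MARKERS = ("libx264", "libx265")


def _present(markers, text):
    """Return the markers that occur as whole words in text, in marker order."""
    return [m for m in markers if re.search(r"\b" + re.escape(m) + r"\b", text)]


def classify_transcode_log(text):
    """Report which hardware and software codec names appear in a log's text."""
    lowered = text.lower()
    return {
        "hardware": _present(HW_MARKERS, lowered),
        "software": _present(SW_MARKERS, lowered),
    }


# Hypothetical log line, not taken from the attached file.
sample = "ffmpeg -c:v h264_cuvid -i input.mkv -c:v h264_nvenc -c:a aac output.ts"
print(classify_transcode_log(sample))
```

If only software names show up for the video stream, the stutter is probably not something the card can help with in its current configuration; if hardware names show up and playback still stutters, the bottleneck is more likely elsewhere (disk, network, or the client).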

Tagged with: transcoding, virtual machine (and 1 more)

-

Disk Activity/Latency when running Emby in a VM on Hyper-V

RedBaron164 posted a topic in General/Windows

I've been running Emby in a VM on Hyper-V for a couple of years now. Recently I've been trying to resolve some disk activity/latency issues that have been causing delays while loading and moving around in Emby from various clients. I think I've finally found my solution and wanted to share what I learned on the off chance it might help someone else. Long story short: move the cache, metadata, and transcoding-temp directories to a separate virtual hard disk (VHD).

I noticed that when an Emby client took a long time to load or started to time out, disk activity on the server would jump noticeably. I also found that running the disk cleanup task caused the same kind of usage. While monitoring disk performance in Task Manager and Resource Monitor, I would see disk activity max out, the disk queue length sit at around 0.90 to 1.5, and the average response time for the disk hit 500 ms. All this even though disk throughput was only being reported at about 500kbps.

I had two VHDXs on the VM: a fixed-size disk for C: and another fixed-size disk for D:, which is where shows are recorded. The first thing I did to try to improve the situation was reconfigure my Hyper-V host's storage as a RAID 10 array instead of RAID 5. This helped a little but not significantly, and the issues were still present.

I kept monitoring disk usage and noticed that the cache directory was being accessed rather frequently, so I decided to move the Emby cache to a different disk. I added a third 10 GB fixed-size disk and changed the cache path. This helped a little, but I would still see performance issues when Library.db was being accessed heavily. After looking into whether I could move Library.db to a different directory (and finding out it would be rather difficult), I noticed that the metadata directory showed up frequently, so I decided to move that instead. I moved the metadata location to the same drive as the cache, and after refreshing the metadata in my libraries I finally noticed a significant improvement in performance. I also moved the transcoding-temp directory to the third drive, just to cover all my bases.

I originally did not think that moving the cache/metadata directories to a different virtual disk would make a difference, since all the VHDs were on the same physical volume. But doing just that appears to have resolved my issue, and now I regret not doing it sooner. I've been running performance tests since making the changes, and so far I have not been able to reproduce my original performance issues with a variety of clients, including FireTV/Web/Theater. My Emby clients are much more responsive, and not having to sit around and wait while browsing my music library is refreshing. And if I never see that VolleyError timeout message again I'll be very happy.

I'm going to keep a close eye on my disk utilization and performance for the next few days, but wanted to share what I was experiencing and what I did in case anyone else runs into the same issue.

On a side note, I did try enabling Quality of Service Management on the virtual disks, but it ended up making the situation worse. I'm also not sure whether this solution applies only to Hyper-V or also to VMware. I'm not running VMware ESXi at home, so I can't say for certain. But if you are running Emby in VMware and are having a similar issue, then maybe this will help you as well.

- 21 replies
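For anyone who wants a rough before/after number when moving these directories, here is a minimal sketch of a small-write latency probe. It is not what Task Manager or Resource Monitor measures; it simply times a handful of small, fsync'd file writes in a given directory, which is a loose stand-in for cache and metadata style activity. The function names `probe_small_write_latency` and `summarize` are made up for this example, and the directory passed in is whatever path you want to compare (for instance the old and new cache locations).

```python
import os
import statistics
import tempfile
import time


def probe_small_write_latency(directory, count=20, size=4096):
    """Write `count` small files under `directory`, fsync each one,
    and return the per-write latencies in seconds."""
    payload = os.urandom(size)
    latencies = []
    # The scratch directory and its files are removed automatically on exit.
    with tempfile.TemporaryDirectory(dir=directory) as scratch:
        for i in range(count):
            path = os.path.join(scratch, f"probe_{i}.bin")
            start = time.perf_counter()
            with open(path, "wb") as handle:
                handle.write(payload)
                handle.flush()
                os.fsync(handle.fileno())  # force the write down to the disk
            latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Return average and worst-case latency in milliseconds."""
    return {
        "avg_ms": statistics.mean(latencies) * 1000,
        "max_ms": max(latencies) * 1000,
    }


# Example run against the system temp directory; substitute the
# directories you want to compare.
print(summarize(probe_small_write_latency(tempfile.gettempdir(), count=5)))
```

Running it against a directory on each virtual disk while Emby is busy gives a crude comparison; numbers will vary a lot between runs, so treat them as indicative only.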

Tagged with: Hyper-V, Virtual Machine (and 7 more)

-

Hello,

Is it possible to get H/W transcoding running on an ESXi virtual machine? I have just installed Emby on an Ubuntu 16.04 server VM, but it runs terribly when I stream to a browser or Kodi. I've tried updating ffmpeg to 3.4 and installing i965-va-driver, but when I run vainfo I get this:

error: can't connect to X server!
error: failed to initialize display
Aborted (core dumped)

I don't have a dedicated graphics card, so is it possible to use the server's onboard Intel Core i7-3770 graphics in the VM? If so, could anyone help me get this running?
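A first check that may help narrow this down is whether the VM sees any GPU device at all. On a headless Linux server, VA-API usually works through a DRM render node rather than an X display, and those nodes conventionally appear as `/dev/dri/renderD*`. The sketch below only lists such nodes; the function name `find_render_nodes` is made up for this example, and the default path is the conventional location, assumed rather than confirmed for this particular VM.

```python
import glob
import os


def find_render_nodes(dri_dir="/dev/dri"):
    """Return the sorted DRM render-node paths (renderD*) under dri_dir.

    An empty list suggests the guest has no GPU exposed to it, in which
    case no VA-API driver inside the VM can provide hardware transcoding.
    """
    return sorted(glob.glob(os.path.join(dri_dir, "renderD*")))


nodes = find_render_nodes()
if nodes:
    print("Render nodes found:", ", ".join(nodes))
else:
    print("No render nodes found; the VM likely has no GPU passed through.")
```

If the list comes back empty, the onboard graphics would first have to be passed through to the guest by the hypervisor before the i965 driver inside the VM has anything to talk to.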